Wonjun Bang

Affiliations: Networked Computing Lab

Systems for ML, Optimized LLM Inference/Serving, Model Quantization

Building 301, Room 1151-2

Seoul National University

wjbang[at]snu[dot]ac[dot]kr

Hi! I'm Wonjun Bang, an enthusiastic researcher with a deep interest in systems for machine learning. I am fascinated by how well-designed software systems can fully exploit the underlying hardware to serve high-demand, resource-intensive applications such as machine learning.

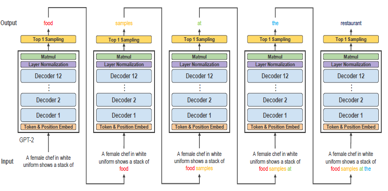

Recently, my research has focused on enabling efficient inference of Large Language Models (LLMs) across various hardware platforms — from edge devices to data-center-scale servers.

Beyond that, I am also interested in optimizing the serving of resource-intensive AI models by intelligently leveraging their internal characteristics, such as activations and KV caches.

You can check out some of my work and publications below.

If you have any questions about me or my work, feel free to ask!

I always welcome discussions with people who share similar interests. 😊